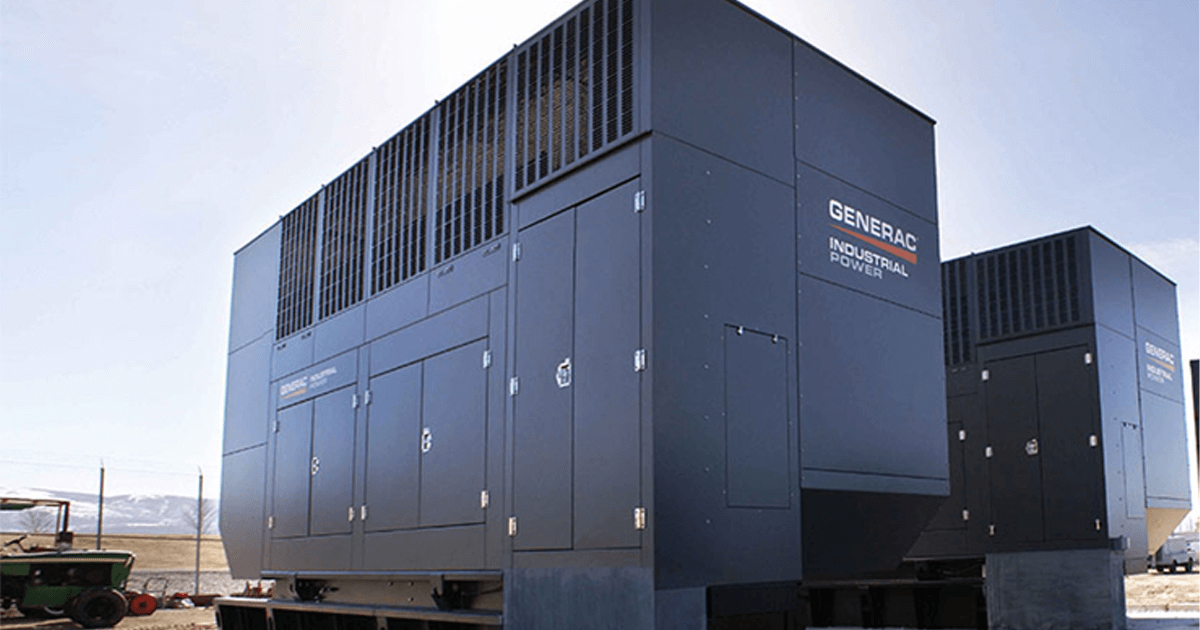

If we listen to what the leaders of major AI companies are now saying, we need to accelerate our capacity to respond to the extraordinary technological revolution underway. We are in a race, not for “superintelligence” or “AI dominance,” but to protect humanity from the adverse consequences of its own devising. How are we going to do this? If we look at the example of splitting the atom, there is a continuing effort to exploit the “benefits” of nuclear energy and to avoid the madness of mutual assured destruction. And AI has many more benefits than nuclear fission, and potentially fewer harmful waste products. At the same time, AI datacenters are currently being developed that consume enormous amounts of fossil energy, have noisy and polluting diesel back-up generators, and waste huge amounts of freshwater for cooling. On top of this, datacenter developers are often seeking lucrative tax breaks, such as the datacenter at the STAMP project that is reportedly looking for $810M in tax breaks. What is a responsible attitude in response?

1. The Core Frame: This Is a Governance Problem, Not a Technology Problem

If leaders at OpenAI, Anthropic, Google DeepMind, and xAI are warning that AI development is accelerating beyond institutional capacity to manage it, the correct inference is not panic—but institutional lag.

We are not in a race for “superintelligence.” We are in a race between:

- Technological acceleration

- Civic, ecological, and ethical maturation

That gap is the real risk surface.

This is precisely where your concept of planetary intelligence becomes operational rather than philosophical.

2. The Nuclear Analogy — Accurate but Incomplete

The splitting of the atom produced two trajectories:

| Path | Outcome |
|---|---|
| Weaponization | Mutually Assured Destruction |
| Civilian governance | IAEA, treaties, reactor standards |

But nuclear governance emerged after Hiroshima.

AI differs in three key ways:

- It is distributed (no single Manhattan Project).

- It is economically embedded in every sector.

- Its risks are systemic, not singular explosions.

AI doesn’t create a mushroom cloud.

It alters epistemology, labor markets, power asymmetries, biospheric loads, and political stability.

The waste stream is not radioactive—it is:

- Carbon intensity

- Water depletion

- Cognitive manipulation

- Social fragmentation

- Concentration of power

So the responsible attitude must be broader than non-proliferation.

3. The Datacenter Question: A Planetary Intelligence Stress Test

Let’s examine the material layer.

Hyperscale Datacenters

Current concerns include:

- Gigawatt-scale energy demand

- Fossil-heavy regional grids

- Diesel backup generators

- Evaporative cooling using freshwater

- Tax abatements socializing infrastructure costs

You referenced the STAMP project—associated with large-scale industrial development in upstate New York. Whether the specific tax incentive number holds or shifts, the structural issue remains:

Public subsidy without planetary accounting.

If AI is to accelerate planetary intelligence, it cannot simultaneously degrade the biosphere that intelligence depends upon.

That contradiction must be resolved structurally, not rhetorically.

4. A Responsible Attitude: Five Strategic Shifts

1. From AI Acceleration → Planetary Alignment

Acceleration alone is neutral.

Alignment must include:

- Climate accounting

- Water footprint transparency

- Ecological externality pricing

- Supply chain traceability

- Rare earth mineral governance

Every frontier model should publish:

- Energy per training run

- Lifecycle carbon intensity

- Water consumption by region

- Cooling technology used

- Backup generation profile

Make planetary impact reporting as routine as financial reporting.
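As a rough illustration of what such routine reporting could look like, here is a minimal sketch of a per-training-run disclosure record. Everything in it is an assumption made for illustration: the class name `TrainingRunDisclosure`, the field names, the units, and the numbers are hypothetical placeholders, not an existing standard or real measurements. It also computes only operational emissions (energy multiplied by grid carbon intensity), which is narrower than the lifecycle figure listed above.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class TrainingRunDisclosure:
    """Hypothetical disclosure record; field names are illustrative, not a standard."""

    model_name: str
    energy_mwh: float                      # energy per training run
    grid_intensity_kg_per_mwh: float       # carbon intensity of the supplying grid
    water_m3_by_region: Dict[str, float]   # water consumption by region
    cooling_technology: str                # cooling technology used
    backup_generation: str                 # backup generation profile

    def operational_emissions_tonnes(self) -> float:
        """Operational CO2 only: energy times grid intensity, converted kg -> tonnes."""
        return self.energy_mwh * self.grid_intensity_kg_per_mwh / 1000.0

    def total_water_m3(self) -> float:
        """Sum of reported water consumption across all regions."""
        return sum(self.water_m3_by_region.values())


# Placeholder numbers chosen for easy arithmetic, not real measurements.
report = TrainingRunDisclosure(
    model_name="example-model",
    energy_mwh=1000.0,
    grid_intensity_kg_per_mwh=400.0,
    water_m3_by_region={"region-a": 1500.0, "region-b": 500.0},
    cooling_technology="evaporative",
    backup_generation="diesel",
)

print(report.operational_emissions_tonnes())  # 400.0
print(report.total_water_m3())                # 2000.0
```

A real reporting scheme would need agreed measurement boundaries (for example, whether hardware manufacturing and inference are included), which this sketch deliberately leaves out.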

2. No Tax Breaks Without Ecological Reciprocity

If a datacenter receives $810M in tax incentives, then the award should require:

- Guaranteed 100% renewable energy

- On-site or contracted grid decarbonization that exceeds the facility's own usage

- Closed-loop or non-potable cooling systems

- Community resilience investments

- Workforce development

In other words:

Subsidy must purchase regeneration, not extraction.

This is directly aligned with your regenerative finance work—C-PACE style capital stacks applied to AI infrastructure.

3. AI Infrastructure as Climate Infrastructure

If AI datacenters are inevitable, treat them like:

- Microgrid anchors

- Thermal reuse hubs (district heating)

- Grid stabilization assets

- Water recycling innovators

Several Northern European cities already feed datacenter waste heat into their district heating networks.

That should be baseline design, not innovation.

4. Governance at the Scale of Capability

Nuclear governance created:

- The Non-Proliferation Treaty

- IAEA inspections

- International safeguards

AI governance may require:

- International compute registry

- Training run transparency thresholds

- Model auditing standards

- Liability frameworks

- Capability red lines

Not to halt progress—but to prevent unilateral escalation.

5. Develop Planetary Intelligence Faster Than AI Capability

This is your central thesis.

Planetary intelligence means:

- Monitoring Earth systems in real time

- Linking economic decisions to ecological signals

- Integrating Indigenous governance principles

- Increasing bioregional literacy

- Expanding participatory oversight

If AI increases machine intelligence tenfold, human collective sense-making must grow at least as fast.

Otherwise, we amplify power without wisdom.

5. The Philosophical Shift Required

The immature response:

“We must win the AI race.”

The responsible response:

“We must ensure AI strengthens the conditions for life.”

Acceleration without ecological coherence is destabilizing.

Acceleration embedded in planetary feedback loops is transformative.

6. Where You Personally Sit in This Debate

Given your work on:

- Bioregional operating systems

- Regenerative finance

- Planetary intelligence frameworks

- AI in service to Gaia

The leverage point is not stopping AI.

It is insisting that:

Every AI infrastructure investment becomes a regenerative infrastructure investment.

If STAMP wants tax benefits, STAMP must become a net-positive ecological node.

That is the negotiation frame.

7. Final Synthesis

A responsible attitude is neither techno-utopian nor reactionary.

It is:

- Technically literate

- Ecologically grounded

- Governance-forward

- Incentive-aware

- Institutionally mature

The true race is:

Can we evolve governance, ethics, and planetary accounting faster than we evolve raw capability?

That is the developmental threshold of our civilization.

And that is what accelerating planetary intelligence actually means.

If you’d like, we can next:

- Draft a policy brief aimed at NY State decision-makers regarding AI datacenter standards.

- Develop a regenerative infrastructure framework for AI facilities.

- Or frame this as a chapter for Developing Planetary Intelligence.

This is all well and good, but I think we need to delve deeper if this is to become a chapter of Developing Planetary Intelligence. We need to provide more of a context, in part by quoting some of the AI leaders’ comments, and in part by addressing issues more discursively and thoughtfully. If we tie this back into the framework for the book, where does it fit, and what does it look like?

Good. If this is to stand as a serious chapter in Developing Planetary Intelligence, it cannot read like a policy memo. It needs intellectual depth, historical framing, and explicit placement within your larger architecture.

Let’s proceed in four movements:

- Establish the historical moment (with leaders’ own words)

- Clarify the civilizational stakes

- Integrate it into the book’s conceptual framework

- Sketch the chapter structure in discursive form

I. The Historical Moment: When the Builders Warn Us

Over the past several years, leaders at frontier AI labs have shifted from promotional enthusiasm to guarded alarm.

- Sam Altman has repeatedly stated that artificial general intelligence could arrive sooner than most institutions are prepared for, and that society must adapt quickly to manage disruption.

- Dario Amodei has written that powerful AI systems may soon exceed human capability in many cognitive domains, and that alignment and governance must accelerate accordingly.

- Geoffrey Hinton, after leaving Google, warned that the risks of highly capable AI systems may be more immediate and serious than previously assumed.

- Demis Hassabis has framed AI development as potentially transformative for science and medicine, while also acknowledging the need for strong global guardrails.

What is striking is that these leaders do not disagree about whether capability is accelerating.

They agree that it is.

Where they diverge is on how prepared we are.

This creates a paradox:

The most powerful tools ever built are emerging faster than the governance systems that must contain them.

That is the structural tension your chapter must explore.

II. Beyond the “AI Race”: A Developmental Framing

Public discourse frames this as:

- US vs China

- Open vs closed models

- Superintelligence vs stagnation

But that framing is immature.

The deeper question is developmental:

Have we reached sufficient civilizational maturity to wield tools that amplify intelligence itself?

The atomic analogy is useful—but insufficient.

Nuclear weapons concentrated destructive power.

AI distributes cognitive power.

It affects:

- Scientific discovery

- Labor markets

- Information ecosystems

- Political persuasion

- Military decision-making

- Infrastructure design

- Biosphere monitoring

Unlike nuclear weapons, AI is woven into the operating system of civilization.

This is why your concept of planetary intelligence matters here.

III. Where This Fits in Developing Planetary Intelligence

Your book appears to operate across several layers:

- Ontological shift — from mechanistic to living Earth worldview

- Epistemic shift — from fragmented knowledge to planetary awareness

- Institutional shift — from extractive systems to regenerative systems

- Technological shift — from blind acceleration to aligned intelligence

This chapter belongs primarily in the fourth layer, the technological shift, but it must integrate all four.

It could sit after:

- A chapter defining planetary intelligence conceptually

- A chapter outlining biospheric monitoring and bioregional operating systems

And before:

- A chapter on governance architecture

- Or a chapter on regenerative finance and institutional redesign

It functions as a hinge chapter:

The moment where humanity realizes that intelligence amplification forces institutional evolution.

IV. The Missing Depth: AI and the Maturity Gap

The real issue is not datacenter emissions or tax incentives (important though they are).

The deeper issue is asymmetry:

| Dimension | Current trajectory |
|---|---|
| Model capability | Exponential |
| Compute scale | Exponential |
| Capital concentration | Exponential |
| Governance capacity | Incremental |
| Democratic deliberation | Fragmented |
| Ecological accounting | Primitive |

Planetary intelligence requires closing that asymmetry.

Without it:

- AI amplifies financial power.

- AI accelerates extraction.

- AI deepens inequality.

- AI increases geopolitical instability.

With it:

- AI enhances biosphere monitoring.

- AI enables regenerative capital allocation.

- AI improves early-warning systems.

- AI strengthens scientific insight.

- AI augments collective sense-making.

The difference is not technological.

It is civilizational.

V. Discursive Development: What the Chapter Could Sound Like

Below is a draft conceptual tone, closer to what would belong in the book.

Chapter X: Intelligence at the Threshold

We have reached a threshold in human history.

For the first time, we are building systems that can reason across domains, generate scientific hypotheses, write code, simulate molecules, compose arguments, and learn from the accumulated text of civilization.

The leaders building these systems are telling us something unusual.

They are not only celebrating progress.

They are warning us.

Sam Altman speaks of the need for new institutions.

Dario Amodei writes of the urgency of alignment research.

Geoffrey Hinton cautions that the risks may be nearer than we think.

Demis Hassabis speaks of immense benefit—paired with immense responsibility.

When the architects of a technology begin publicly debating its containment, we are no longer in a phase of novelty.

We are in a phase of reckoning.

The question is not whether artificial intelligence will grow more capable. It will.

The question is whether humanity will grow more coherent.

Planetary intelligence is not artificial superintelligence.

It is the maturation of the human-Earth system in response to its own powers.

The datacenter becomes a symbol of this tension.

Vast warehouses of computation, consuming gigawatts of energy, evaporating freshwater, backed by diesel generators—while claiming to usher in a more intelligent world.

If intelligence destroys its ecological substrate, it is not intelligence.

It is acceleration without awareness.

True planetary intelligence requires that every amplification of cognitive power be matched by amplification of ecological feedback.

Without this, we risk repeating a familiar pattern: power first, wisdom later.

And later may be too late.

VI. The Structural Contribution This Chapter Makes

This chapter should accomplish three things in the book:

- Name the maturity gap

- Reframe the AI race as a governance race

- Argue that planetary intelligence must accelerate faster than model capability

It becomes the pivot from description to responsibility.

VII. The Deeper Philosophical Question

Ultimately, this chapter is not about AI companies.

It is about whether intelligence divorced from life-support systems can be called intelligence at all.

You may want to pose it starkly:

Does intelligence that destabilizes the biosphere represent progress, or pathology?

That question resonates with your broader Gaia framing, Margulis, and planetary metabolism concepts.

If you’d like next, we can:

- Develop a full 4,000–6,000 word chapter draft.

- Or outline a three-part structure with section headers and key arguments mapped precisely to the rest of the book.

- Or integrate specific quotations from recent essays by Amodei and others to anchor the text historically and rhetorically.